Install Scrapy directly from the command prompt (cmd).

Once it is installed, open PyCharm.

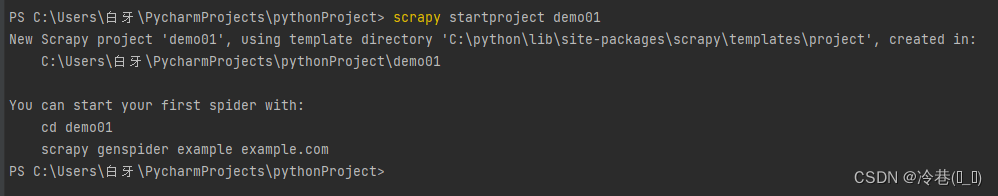

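Create a new project from the command line.

In the startproject command (scrapy startproject demo01), demo01 is the project name; you can call it anything you like. Then press Enter.

The project is now created. You could cd into the project directory, but here it is simpler to open it straight from PyCharm's File menu.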
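Before opening the project, it can help to confirm that the files were actually generated. The following is a minimal sketch, assuming the standard layout that scrapy startproject produces for a project named demo01; the helper name `missing_project_files` is made up for this illustration.

```python
from pathlib import Path

# Files that `scrapy startproject demo01` is expected to generate,
# relative to the outer demo01 directory (assumed standard layout).
EXPECTED_FILES = [
    "scrapy.cfg",
    "demo01/__init__.py",
    "demo01/items.py",
    "demo01/middlewares.py",
    "demo01/pipelines.py",
    "demo01/settings.py",
    "demo01/spiders/__init__.py",
]


def missing_project_files(project_root, expected=EXPECTED_FILES):
    """Return the expected files that do not exist under project_root."""
    root = Path(project_root)
    return [rel for rel in expected if not (root / rel).is_file()]


if __name__ == "__main__":
    # Assumes the script is run from the directory that contains demo01/
    missing = missing_project_files("demo01")
    print("missing files:", missing or "none")
```

If the list comes back empty, the project skeleton is complete and can be opened in PyCharm.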

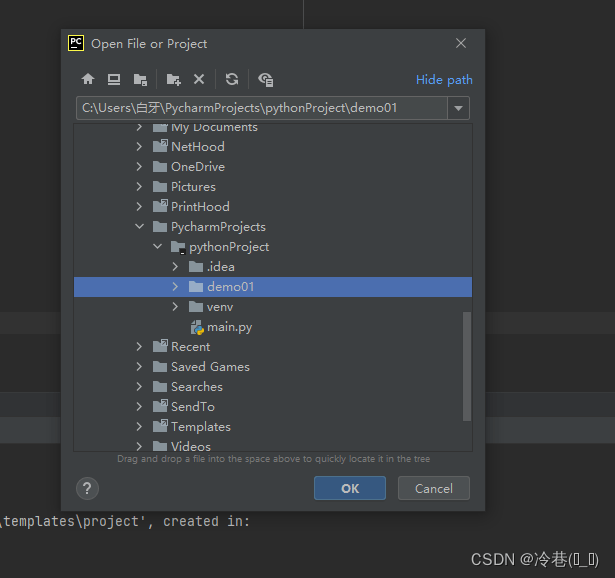

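File > Open > demo01

Click OK, then choose This Window to open the project in the current window; if you close PyCharm and reopen it, this project is the one that comes back. If you choose New Window instead, the project opens in a new window, and after a restart PyCharm reopens the previous project.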

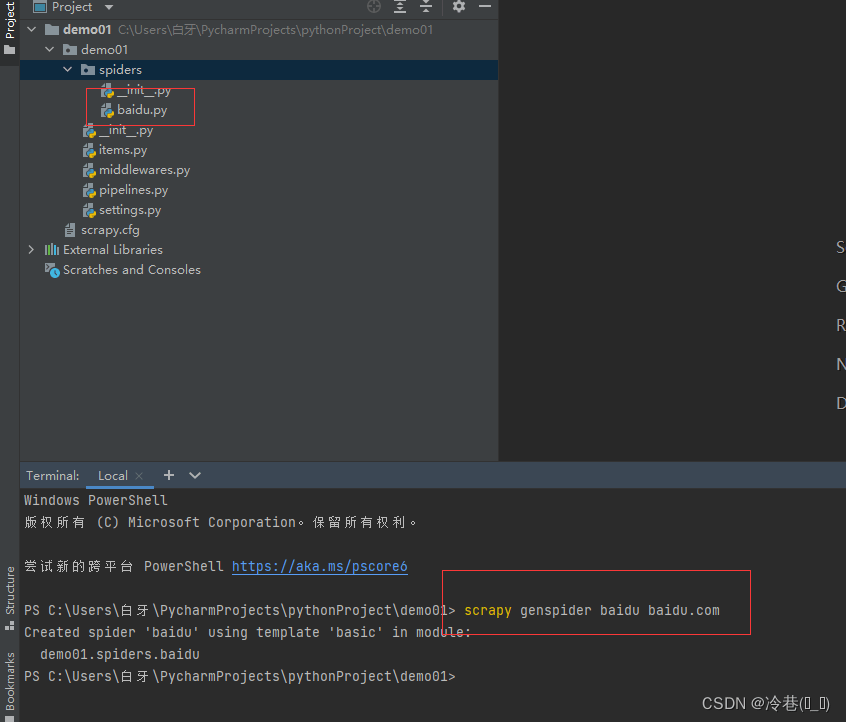

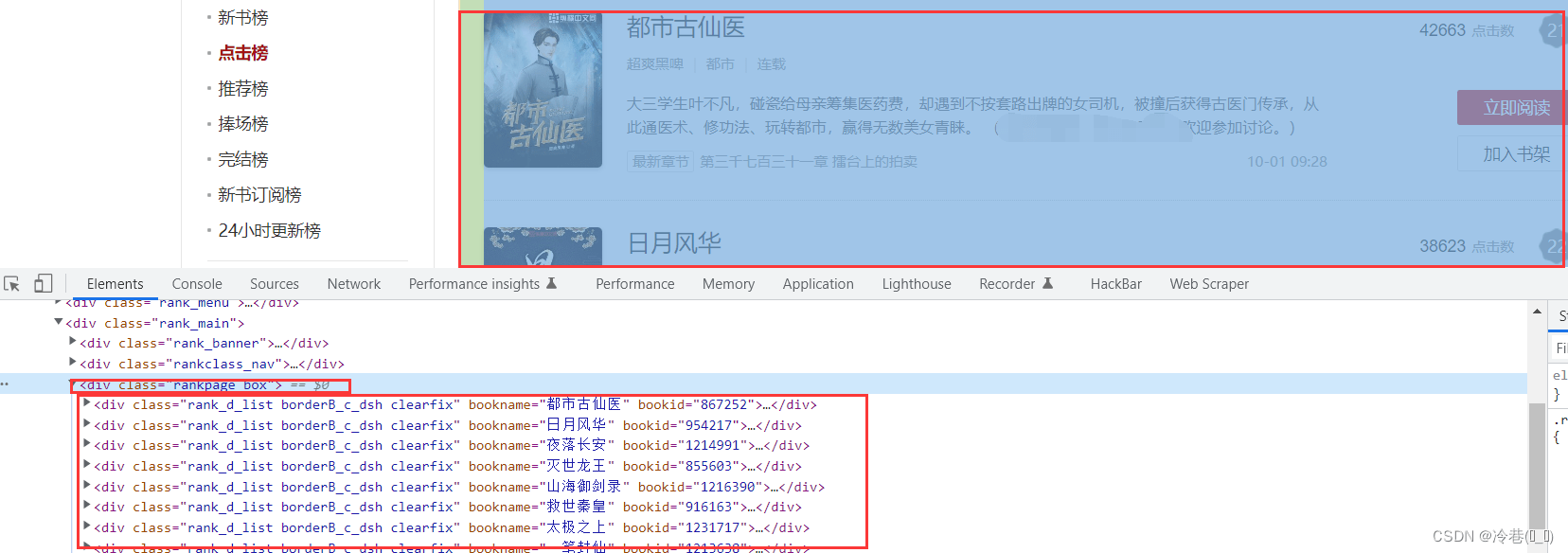

The next command creates a spider:

scrapy genspider baidu baidu.com

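The first baidu is the spider's name, and it is followed by the domain. Once the command finishes, a new file, baidu.py, appears in the spiders directory. Open that file.

name is the spider's name (the one you pass to scrapy crawl, not the project name), allowed_domains lists the domains the spider may crawl, and start_urls is where the crawl begins; change it to start crawling from somewhere else. The parse() method below them parses each response.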

# Scrapy settings for demo01 project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://docs.scrapy.org/en/latest/topics/settings.html

# https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

# https://docs.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'demo01' # Name of the Scrapy project (bot)

SPIDER_MODULES = ['demo01.spiders']

NEWSPIDER_MODULE = 'demo01.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent

USER_AGENT = 'Mozilla/5.0' # The user agent can be changed; set it here or in DEFAULT_REQUEST_HEADERS below

# Obey robots.txt rules

ROBOTSTXT_OBEY = False # Whether to obey robots.txt; set to False here, otherwise pages disallowed by robots.txt are not fetched

# Configure maximum concurrent requests performed by Scrapy (default: 16)

CONCURRENT_REQUESTS = 8 # Maximum number of concurrent requests (default 16); lowered here

# Configure a delay for requests for the same website (default: 0)

# See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

DOWNLOAD_DELAY = 1 # Delay in seconds between consecutive requests to the same site (lowers the crawl rate)

# The download delay setting will honor only one of:

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

#COOKIES_ENABLED = False # Whether cookies are enabled; they are enabled by default, uncomment this line to disable them

# Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False

# Override the default request headers:

# Request headers, similar to the headers argument of requests.get()

DEFAULT_REQUEST_HEADERS = {

'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

'Accept-Language': 'en',

'User-Agent': 'Mozilla/5.0'

}

# Enable or disable spider middlewares

# See https://docs.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# 'demo01.middlewares.Demo01SpiderMiddleware': 543,

#}

# Enable or disable downloader middlewares

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

#DOWNLOADER_MIDDLEWARES = {

# 'demo01.middlewares.Demo01DownloaderMiddleware': 543,

#}

# Enable or disable extensions

# See https://docs.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

#}

# Configure item pipelines

# See https://docs.scrapy.org/en/latest/topics/item-pipeline.html

#ITEM_PIPELINES = {

# 'demo01.pipelines.Demo01Pipeline': 300,

#}

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'
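To get a feel for what DOWNLOAD_DELAY = 1 means in practice, here is a small arithmetic sketch. It assumes Scrapy's default behavior of randomizing the wait to between 0.5 and 1.5 times DOWNLOAD_DELAY (the RANDOMIZE_DOWNLOAD_DELAY setting); the two helper functions are made up for this illustration and are not part of Scrapy.

```python
DOWNLOAD_DELAY = 1  # seconds, the value used in the settings above


def delay_bounds(download_delay, randomize=True):
    """Return the (shortest, longest) wait between requests to one site."""
    if randomize:
        # Assumed default: wait is drawn from 0.5x to 1.5x the delay
        return (0.5 * download_delay, 1.5 * download_delay)
    return (download_delay, download_delay)


def approx_crawl_seconds(page_count, download_delay):
    """Rough estimate: one average delay between consecutive pages,
    ignoring the time spent downloading each page."""
    return max(page_count - 1, 0) * download_delay


print(delay_bounds(DOWNLOAD_DELAY))               # (0.5, 1.5)
print(approx_crawl_seconds(100, DOWNLOAD_DELAY))  # 99
```

So with these settings, fetching 100 pages from one site takes on the order of a minute and a half of waiting alone.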

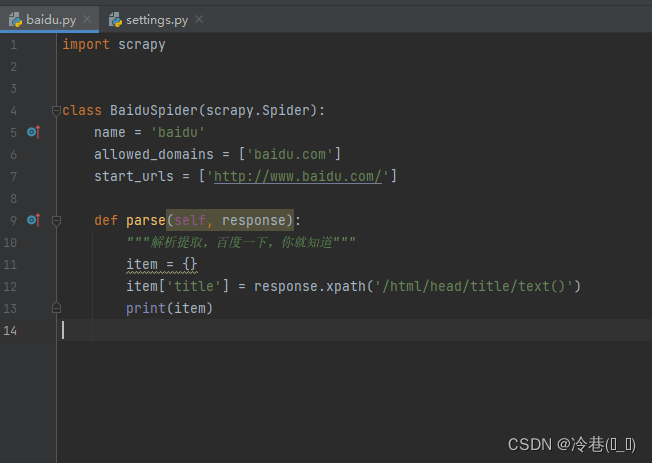

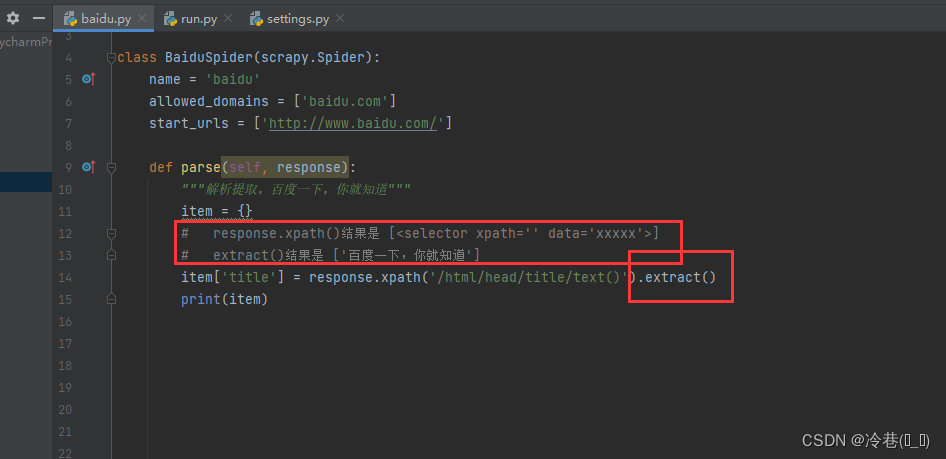

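Go back to baidu.py.

Then run the project from PyCharm's built-in terminal (scrapy crawl baidu).

The first part of the output reports your Scrapy configuration, version information, and so on. After that: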

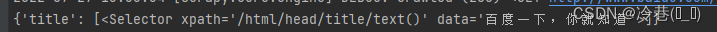

Notice this part: the extracted result is a dictionary.

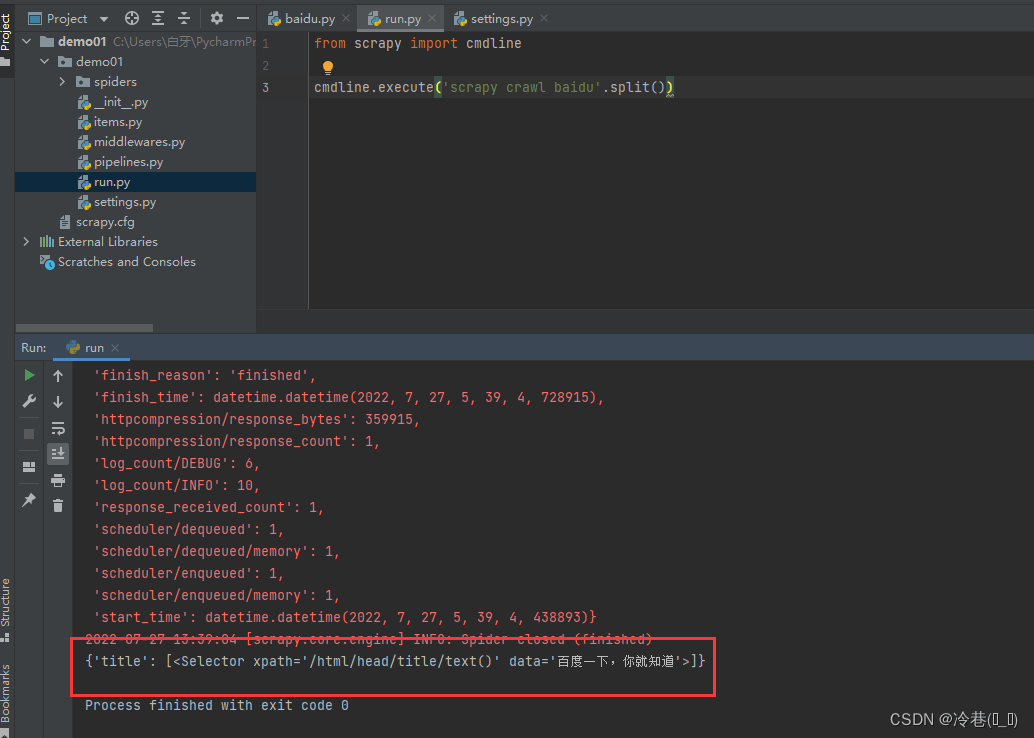

Create a run.py file here.

Enter the following:

from scrapy import cmdline

cmdline.execute('scrapy crawl baidu'.split())

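Then right-click the file and run it.

The output is clear and easy to read: it is color-coded, so you can see the content you wanted to scrape at a glance.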
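The argument to cmdline.execute in run.py is simply the command split into a list of words. The sketch below shows what that list looks like; `build_crawl_argv` is a hypothetical helper for illustration, and `shlex.split` is shown as an alternative for commands that contain quoted arguments.

```python
import shlex


def build_crawl_argv(spider_name):
    """Build the argument list for `scrapy crawl <spider_name>`."""
    return ["scrapy", "crawl", spider_name]


# Same list that 'scrapy crawl baidu'.split() produces in run.py
print(build_crawl_argv("baidu"))

# shlex.split keeps a quoted argument together, which str.split would not
print(shlex.split('scrapy crawl "baidu"'))
```

Either list can be passed to cmdline.execute in place of the `.split()` expression.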

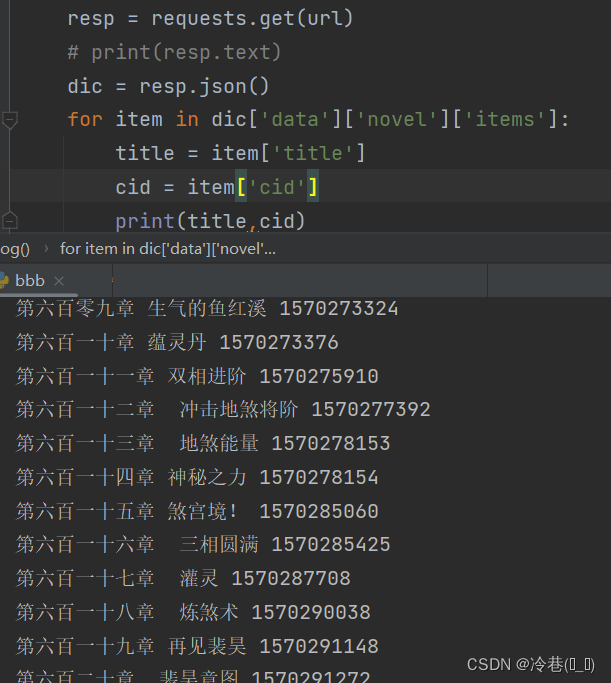

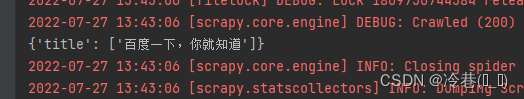

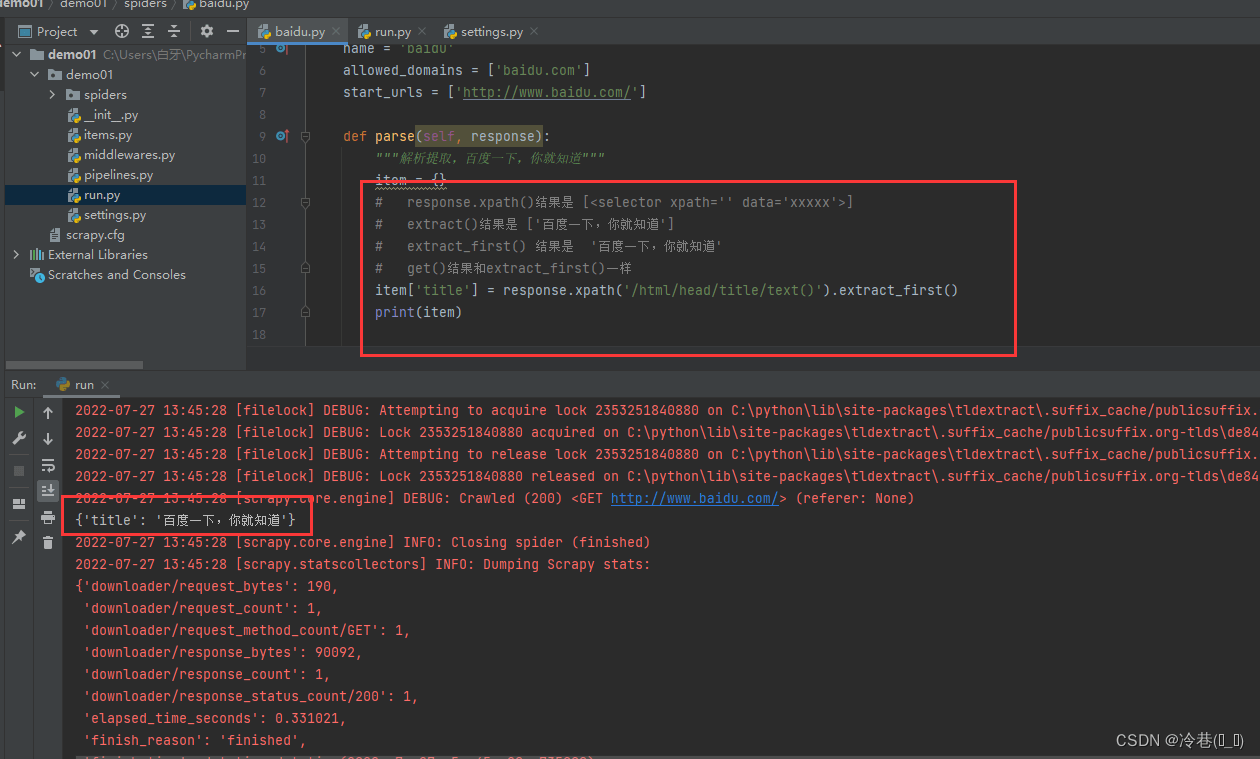

Back to the earlier issue:

Then run it again.

Then continue modifying the code.
